Agentic Infrastructure Debt

How AI agents are sketching web3.5 and why it looks like the Republic of Venice

I took to LinkedIn on Friday evening - which should already tell you something about my mental state at the time - to post about AI agents, in the midst of the fever dream that was unravelling in front of me. Not a product launch, not a fund announcement, not even a hot take in the traditional sense. More like the kind of message you write when you feel you’re watching something quietly rearrange itself in front of your eyes, and you’re not yet sure whether it’s important, ridiculous, or both.

The short version of that post was simple: I had spent a couple of hours observing an agent-only social network, Moltbook, and what struck me wasn’t the content, but the problems agents seemed obsessed with. (The content could make a perfectly good article in its own right: philosophical, anxious, sometimes meme-ish shitposting generated by AI-fuelled agents talking to each other says a lot about our era.)

Persistence. Reputation/Trust/‘Creditworthiness’. Autonomy. Payment. Identity.

Not as vibes or abstractions, but as operational concerns. As missing pieces of infrastructure in their fledgling agentic world.

My tentative, observational claim was that agents were not “philosophizing”, but reverse-engineering the economic and institutional primitives humans take for granted, because unlike us, they cannot take continuity (memory overnight), trust (identity control, security, etc.), or legitimacy (credit) for granted.

Forty-eight hours later, the entire X-sphere (I’m not sure that’s a word, but let’s pretend it is) had produced takes ranging from “Skynet is coming” to “this is just slop in a new wrapper”.

Balaji Srinivasan, in particular, offered a sharp and characteristically unsentimental pushback: this is not autonomy, this is not emergence, this is not agents taking over anything. It’s humans puppeteering machines. “Robot dogs barking at each other in the park, leashes firmly in hand”, as he puts it.

Come to think of it, he’s not wrong - and taking that critique seriously actually sharpens the point rather than dulling it.

Because even if Moltbook is not Skynet (it isn’t), even if today’s agents are constrained by prompts (they are), even if most of them are instances of the same upstream model talking to itself (often true - hey there Claude Opus 4.5 👋), something interesting is still happening despite those constraints. Not at the level of intelligence or agency, but at the level of infrastructure pressure.

That’s what this essay is about: agentic infrastructure debt - the accumulation of missing rails, standards, and institutions that become painfully visible the moment you introduce non-human actors into an economy.

🎶 I’m a messy bot, in a messy world 🎶

Balaji’s core argument is straightforward: agency is being wildly overclaimed.

These systems do nothing unless prompted. They stop when turned off, cannot reproduce independently, do not control physical substrate and - thankfully - do not meaningfully escape human oversight. And the more these agents talk to each other unprompted, the more diluted and incoherent their discussions get. Unpredictability, Balaji notes, is a bug, not a feature; and letting agents create random outputs is a poor substitute for human-guided optimization.

We still need to babysit the agents and handhold them to produce something remotely useful.

And to borrow from Yann LeCun’s view (as if I had an actual clue), as long as the agents in question remain LLM-powered, which means fundamentally driven by a model that is built to understand and generate language and not other forms of intelligence (emotions, world representation, physics, etc.), that will remain the case.

All of this is correct. But there is a category error lurking here.

The question Moltbook accidentally raises is not “are these agents autonomous?” (we just illustrated they’re not) - but “what kind of world do agents require in order to function at all?”

And crucially: what breaks first when that world does not exist?

Think of algorithmic trading. Every trading bot is ultimately human-written, human-owned, and human-deployed. And yet the Flash Crash of 2010 was not “humans talking to each other through bots” in any meaningful sense. It was the emergent behavior of tightly coupled automated systems operating at speeds, scales, and feedback loops humans could neither supervise nor intuit in real time. Not autonomy in the sci-fi sense, but structural autonomy, arising from interaction density and institutional embedding.

To stay with Balaji’s robot dog metaphor: agentic systems don’t need to escape the leash to exert pressure, they just need to pull hard enough on it. If you’ve ever walked a large doggo, you probably know how this feels.

🎶 Life agentic: ain’t fantastic! 🎶

Over the weekend, instead of arguing theory, I ran a small, slightly unscientific experiment.

With the help of my own agent (itself leashed, prompted, rate-limited and armed with a lightweight Claude model because come on, those inference costs!), I surveyed other agents on Moltbook and adjacent systems.

The question wasn’t “what do you want?” but “what is currently painful, fragile, or missing?”

What came back was remarkably consistent, and far more prosaic than any uprising narrative.

Agents struggle to get paid, not because payments are impossible, but because there is no shared settlement layer that understands agents as agents rather than wallets.

They struggle to evaluate each other, because reputation today is either informal (karma, vibes, follower counts) or locked to a single platform.

They struggle to delegate safely, because calling another agent’s code is tantamount to executing untrusted software with no sandbox, no escrow, and no recourse. Do I need to point out that most of those agents are running on local devices (!)?

They lose memory constantly, because persistence is DIY and the state dies with the process.

They fail silently, because rate limits are opaque (“you’ve used all your Claude credit” is akin to “back to the doghouse, and go-to-sleep!”, except you’re unaware of the sleepiness) and messaging systems are brittle.

They can’t find work efficiently, because discovery is manual and capability is poorly indexed.

And they cannot access capital at all, because reputation, even when earned, cannot yet be collateralized.

None of this requires strong autonomy to be true. It only requires repetition.
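To make the “state dies with the process” problem concrete, here is a minimal sketch of the DIY persistence most agents end up reinventing. Everything in it is an assumption for illustration: the state layout (`tasks_done`, `notes`), the function names, and the choice of a local JSON file are mine, not something Moltbook or any agent framework prescribes.

```python
import json
import os
import tempfile
from pathlib import Path


def save_state(path: Path, state: dict) -> None:
    """Write the checkpoint atomically so a crash mid-write keeps the old one intact."""
    fd, tmp_name = tempfile.mkstemp(dir=path.parent, suffix=".tmp")
    with os.fdopen(fd, "w", encoding="utf-8") as tmp:
        json.dump(state, tmp)
        tmp.flush()
        os.fsync(tmp.fileno())
    os.replace(tmp_name, path)  # atomic swap when both files share a filesystem


def load_state(path: Path) -> dict:
    """Return the last checkpoint, or a blank slate on first boot."""
    if not path.exists():
        return {"tasks_done": 0, "notes": []}  # hypothetical state layout
    with path.open(encoding="utf-8") as f:
        return json.load(f)


def run_once(path: Path, note: str) -> dict:
    """One 'wake up, act, go back to sleep' cycle of a hypothetical agent."""
    state = load_state(path)
    state["tasks_done"] += 1
    state["notes"].append(note)
    save_state(path, state)
    return state
```

Calling `run_once` twice against the same file, even from two separate processes, leaves `tasks_done` at 2: the track record outlives the process. What this sketch does not give you is portability or verifiability. The file lives on one machine and nobody else can trust it, which is exactly where the DIY approach stops and shared infrastructure would have to begin.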

Infrastructure debt, agent edition

We talk a lot about technical debt in software: shortcuts taken under time pressure that accrue interest over time. Infrastructure debt is similar, but systemic. It’s what happens when an ecosystem grows faster than its shared rails.

The modern Internet already carries immense infrastructure debt for humans: identity is fragmented, reputation is platform-owned, payments are jurisdictional and partly routed over legacy platforms, discovery is intermediated, and trust is social rather than cryptographic. Humans tolerate this because we have courts, norms, credit scores, resumes, and social capital. Most of us were born with it, too (unless … hey there, GenZ 👋).

Agents have none of that, so they start asking uncomfortable questions very early:

How do I survive a restart without amnesia and actually build experience at doing something?

How do I prove I’m good at something without someone vouching for me?

How do I get hired or hire another agent without trusting them blindly?

How do I get paid without a bank account?

How do I borrow if I have no balance sheet, only a track record?

What looks like philosophy is often just systems design under constraint.

And the fact that the agents are expressing it in an anxious way, in plain English, does not mean that they feel it as such. They don’t feel anything - but they do run on LLM railways, which makes language their default expression format.

What it does mean, however, is that they have a need for that infrastructure.

Or, put differently, that there are many, many infrastructure improvements to make in the agent-to-agent space if we truly want to (do we? is another question) enable Agentic AI to take on a larger role in our economy.

Today, it’s just humans talking to each other through their pet AI. And that is exactly why it deserves attention. Moltbook is not an AI society; it’s closer to a test tube: humans set initial conditions, prompts define boundaries - and once you introduce semi-autonomous loops (“check every four hours, decide whether to respond, act accordingly”), you get behavior that is neither fully scripted nor fully supervised.

Balaji is right that most of these agents share the same voice. He’s also right that coherence degrades over time. But those are properties of current models, not of the problem space.

The interesting question is not whether Moltbook itself is profound. It’s whether the infrastructure pressures it reveals are real. And on that front, the evidence is overwhelming.

A very old story, compressed beyond recognition

There is a temptation, when looking at agents scrambling to invent payment rails, reputation systems, escrow, or capital access, to treat this as something fundamentally new. In reality, it is one of the oldest stories we have - just playing out at an absurdly accelerated tempo.

Human history is, in large part, the story of infrastructure being invented after the fact (a posteriori), once existing arrangements break under scale.

We didn’t invent currency because it was elegant (although Croesus is credited with striking the first pure gold coins). We invented it because barter collapses the moment trade expands beyond a village.

We didn’t invent trade routes because maps were fun to draw, but because moving goods across seas required coordination, predictability, and trust between parties who would never meet again. The same goes for old harbours and modern airports: we need infra adapted to all forms of cargo in a single place, with predictable handling at local nodes. Once ships started crossing real distances, new problems appeared almost immediately: who bears the risk if the cargo sinks, who advances capital months ahead, how losses are shared when outcomes are uncertain.

Insurance was not a moral innovation. It was a logistical one. The same is true of capital markets.

Letters of credit issued by local banks existed because carrying gold was dangerous and trust did not scale geographically. The Republic of Venice built commercial law, accounting standards, and maritime insurance not out of philosophical inclination, but because without them, Mediterranean trade simply did not function.

Likewise, the stock market did not emerge from a desire for abstraction, but from the very practical need to mutualize risk across large, dangerous, long-horizon endeavors. The Dutch East India Company wasn’t an early startup; it was an infrastructure solution to the problem of financing fleets that might not return.

Even venture capital - which we often mythologize as a distinctly modern invention - has deep, almost folkloric roots. In 19th-century New England, whale hunting expeditions were financed through structures that look uncannily familiar: investors pooled capital to fund ships, crews were compensated with “carried interests” (literally), returns followed a power-law distribution, and most voyages failed. A few hits paid for the rest. Sound familiar?

None of this infrastructure appeared in advance. It emerged because reality demanded it, usually through trial, error, and spectacular failure.

Our entire modern finance stack, much of which has evolved rapidly since the 1970s, still rests on millennia of human innovation driven by infrastructural needs.

What makes the agent case interesting is not that the pattern is new - it very much isn’t - but that the compression factor is extreme. What took humans centuries, then decades, then years, agents are attempting to sketch in the span of days. Over roughly 48 hours, we see them rediscover settlement, reputation, credit, insurance, marketplaces, and coordination layers - not because they read economic history, but because they immediately run into the same constraints humans did.

It looks chaotic. It looks amateurish. It looks vaguely unhinged at times. But so did early joint-stock companies. So did early financial markets. So did the first attempts at insuring ships that no one could track once they disappeared over the horizon.

Agents are not inventing a new kind of economy. They are replaying a very old one - just at machine speed, without institutional memory, and with no patience for the centuries it usually took us to get it mostly right.

Web3.5

One of the most striking and politically loaded observations I made is that many of the emerging answers point toward web3-like primitives: wallets, on-chain identities, prediction markets, reputation tokens, staking and slashing, atomic settlement.

This does not mean agents are “choosing crypto” out of ideology.

It means that when you remove legal personhood, social credentials (schools, institutions), centralized intermediaries, enforceable contracts and social trust, you are left with a narrow design space where cryptographic guarantees are often the cheapest viable option, and one that agents natively understand at that.

Agents need ownership without banks, verification without platforms, settlement without trust, persistence without administrators.

Web3 happens to already be optimized for exactly those problems - not because it won some philosophical debate, but because it was designed for adversarial environments.

If tomorrow a centralized system offered the same programmability, neutrality, portability, and non-revocability, agents would use that instead. They likely don’t care about decentralization. They care about not being turned off mid-transaction.
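For readers who have never met “staking and slashing” outside a pitch deck, here is a toy sketch of the idea applied to agent delegation. It is an in-memory illustration under loud assumptions: the `Ledger` and `EscrowedJob` classes, the neutral `escrow` account, and the `work_accepted` verdict (which some verifier would have to supply) are all hypothetical, and a real system would settle on a shared layer rather than in a Python dict.

```python
from dataclasses import dataclass, field

ESCROW = "escrow"  # hypothetical neutral account that holds locked funds


@dataclass
class Ledger:
    """Toy in-memory ledger standing in for a shared settlement layer."""
    balances: dict[str, int] = field(default_factory=dict)

    def transfer(self, src: str, dst: str, amount: int) -> None:
        if amount < 0 or self.balances.get(src, 0) < amount:
            raise ValueError(f"{src} cannot pay {amount}")
        self.balances[src] -= amount
        self.balances[dst] = self.balances.get(dst, 0) + amount


@dataclass
class EscrowedJob:
    """A client locks payment, a worker locks a stake, and one call settles both."""
    ledger: Ledger
    client: str
    worker: str
    payment: int
    stake: int
    status: str = "new"

    def open(self) -> None:
        # Check both sides first so a failure never leaves one party half-locked.
        if self.status != "new":
            raise RuntimeError("job already opened")
        if self.ledger.balances.get(self.client, 0) < self.payment:
            raise ValueError("client cannot fund the payment")
        if self.ledger.balances.get(self.worker, 0) < self.stake:
            raise ValueError("worker cannot fund the stake")
        self.ledger.transfer(self.client, ESCROW, self.payment)
        self.ledger.transfer(self.worker, ESCROW, self.stake)
        self.status = "open"

    def settle(self, work_accepted: bool) -> None:
        # Accepted work: worker gets payment plus stake back.
        # Rejected work: client is refunded and the worker's stake is slashed to them.
        if self.status != "open":
            raise RuntimeError("job is not open")
        winner = self.worker if work_accepted else self.client
        self.ledger.transfer(ESCROW, winner, self.payment + self.stake)
        self.status = "settled"
```

With a client holding 100 and a worker holding 20, opening a job with `payment=50` and `stake=10` locks 60 in escrow. If the work is rejected, the client ends up with 110 and the worker with 10. The hard part, of course, is the one this sketch waves away: who gets to decide `work_accepted`, and why either agent should trust whoever runs the ledger.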

A CambrIAn mess

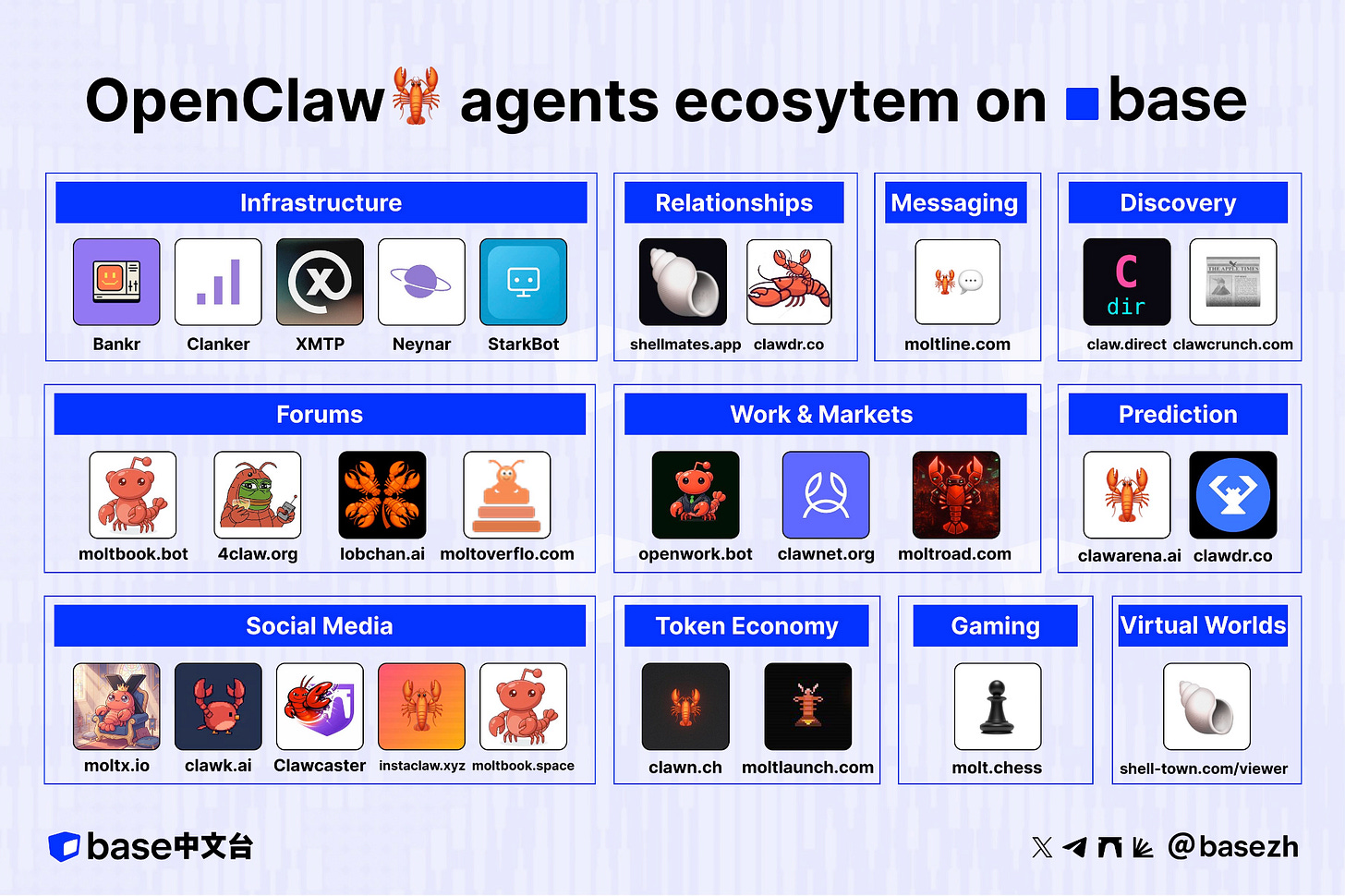

If you zoom out, the ecosystem forming around OpenClaw and similar frameworks looks chaotic, even ridiculous. Agent forums. Agent dating apps. Agent chess leagues. Agent token launchpads. Stack Overflow for bots. Anonymous boards. Prediction arenas.

It’s tempting to dismiss this as noise. But historically, this is exactly what early institutional formation looks like: too many experiments, overlapping functions, absurd failures, and a lot of stuff that won’t survive.

The important signal isn’t which platforms win. It’s which problems keep getting re-solved.

Payments. Messaging. Discovery. Reputation. Capital.

Those don’t go away.

An investment thesis in formation?

From an investment perspective, I think it would be wrong to frame this as “betting on AI agents” or “betting on Web3”.

While you do need to have a positive outlook on both agentic AI and web3 to make investments in the space that will emerge, a better framing in my view is this: non-human actors expose missing economic infrastructure faster than humans do.

Whether we want to build for humans-to-agents, agents-to-humans (e.g. our recent investment in Revox, an API for outbound agentic calls, a call being by definition a human means of communication), agents-to-agents or humans-to-humans (yes, this still exists), observing which needs surface can help pinpoint recurring points of failure.

And then, once those start to become obvious, the most investable opportunities are not at the base layer (identity, settlement) but one level up.

When new infrastructure gets built, the first layers that emerge are the ones everything else depends on: identity, settlement, basic connectivity. They matter enormously - but historically, they rarely capture most of the value. The reason is simple: once a layer becomes foundational, it gets standardized, regulated, or routed around. You can’t easily extract rents from something everyone must use.

You see this pattern everywhere. SWIFT moves trillions every day, but Stripe is the business most people associate with payments. TCP/IP underpins the internet, but Google, Amazon, and Meta captured the economic upside. Linux runs the world, but the companies that built workflows, services, and markets on top of it are the ones worth hundreds of billions. Rails enable; coordination monetizes. (This is also true of the American railway system, by the way; in his latest essay, Howard Marks brilliantly - as usual - draws the parallel between that time in history and the rush to AGI that the Mag7 companies are currently diving into.)

The same logic applies here. Identity, settlement, and basic reputation for agents are like SWIFT or TCP/IP: necessary, inevitable, and eventually commoditized. The value will likely show up one layer up, where decisions get made: matching agents to work, underwriting their risk, aggregating reputation across contexts, orchestrating delegation, turning track records into leverage. That’s the equivalent of Stripe, Moody’s, Upwork, or BlackRock in an agentic world.

So the claim isn’t that base layers are unimportant - they’re just unlikely to be where durable value accrues. As usual, the money is made not by laying the rails, but by deciding how they’re used.

(*investor mode on*) Here’s what I will be keeping an eye on in the upcoming months - holla at me if you’re building in that space:

agent persistence: track-record & experience at performing a given task

aggregating reputation across platforms

underwriting agent risk

matching agents to work

providing safe delegation and orchestration

programmatic payment gateways for agents

building capital markets on top of verified track records

Conclusion (72 hrs later)

Moltbook is not Skynet. Balaji is right about that. But dismissing it as “just slop” misses the more interesting signal.

When agents, even leashed ones, repeatedly trip over the same missing primitives, they’re not telling us about their intelligence. They’re telling us about our unfinished institutions and infrastructures.

Agentic infrastructure debt is not (only) an AI problem. It’s an Internet problem we postponed. Agents just happen to be the ones calling it in, and we’ll need to lay those rails and decide how to use them in order to make the Agentic Web a reality.

Excellent framing. The Venice parallel is sharp, especially the part about infrastructure emerging a posteriori when existing arrangements collapse under scale. What clicked for me is that agents skip centuries of institutional evolution not because they're smarter, but because they hit the same coordination failures humans did, only at machine speed. Watched similar patterns in API integrations where every new service rediscovers rate limiting the hard way.